If you're a growth operator, content leader, or founder (even an aspiring one) trying to scale content without scaling headcount while mastering AI at the same time, join our private community of global professionals → AI-Led Growth


You ran the test. You waited three weeks. Only to hear: "It’s inconclusive."

Optimizely published data showing 90% of A/B tests "lose," meaning the variant shows no statistically significant difference from the control, so teams stick with the control. What a waste of time and resources, right?
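If you've never looked under the hood of an "inconclusive" verdict, here's a minimal sketch of one common way to check it: a two-sided, two-proportion z-test on conversion counts. The function name, the visitor and conversion numbers, and the 0.05 threshold are all assumptions for illustration. Your testing tool may use a different statistical method entirely.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for the difference between two conversion rates.

    conv_a / n_a: conversions and visitors for the control
    conv_b / n_b: conversions and visitors for the variant
    Returns (z, p_value).
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both arms convert equally
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical test: 2.00% vs 2.15% conversion on 10,000 visitors each
z, p = two_proportion_z_test(200, 10_000, 215, 10_000)
alpha = 0.05  # assumed significance threshold
print(f"z = {z:.2f}, p = {p:.3f}")
print("inconclusive" if p >= alpha else "significant")
```

With these made-up numbers the variant converts slightly better, but the gap is well within what chance alone would produce, so the test reads as inconclusive.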

The problem isn't with the testing itself. It's what people choose to test and why.

3 questions to ask before you run your next A/B test

Casey Hill (CMO at DoWhatWorks, with access to 27,000+ experiments) sees the same pattern behind every failed testing program:

1. Do you understand where your traffic comes from?

Paid retargeting and dark social require completely different positioning. If you're testing headlines without knowing who's reading them, you're testing blind.

2. Are you testing against a specific problem?

Most teams run a dozen homepage hero variants and see what wins. But the question isn't "which headline performs best." It's "what problem are we solving — and does this copy solve it better?"

Everything should ladder up to that.

3. Are you testing anything meaningful?

This is a gap Casey often sees: ten copy variants, but none of them introduces new information or provides new direct value. They rearrange words without actually changing the message.

The pattern behind the tests that win:

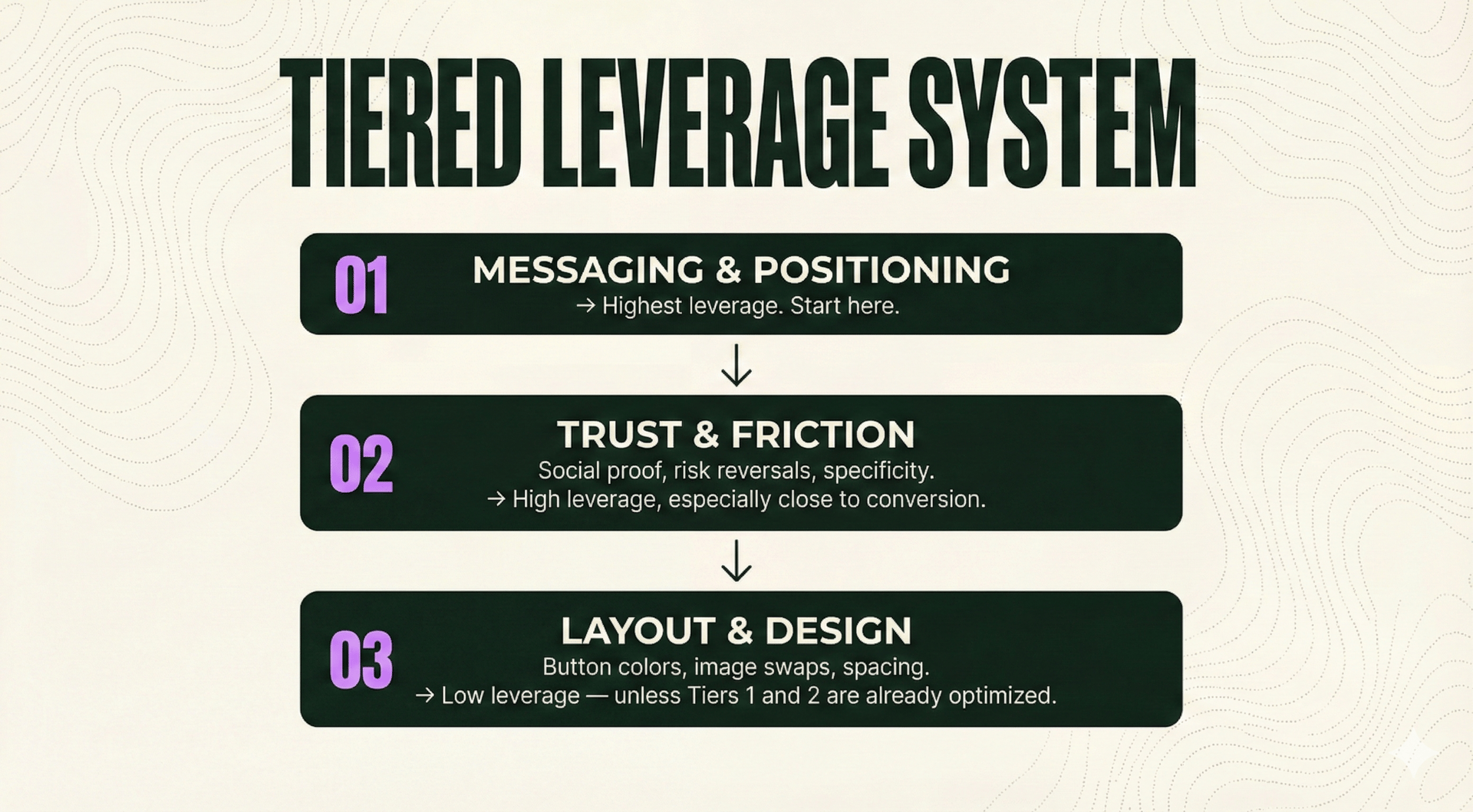

Tier 1: Messaging & positioning

What you say and how you frame it. This changes whether someone wants the thing.

IBM tested "Customize your AI" against "Take the Tour." The AI version lost. Why? 53% of consumers now distrust AI-powered claims (Gartner, 2025). The winning CTA removed friction the team didn't know existed.

→ Highest leverage. Start here.

Tier 2: Trust & friction

Social proof, risk reversals, specificity. This changes whether someone believes you.

Schwab tested adding a single line of social proof near the CTA. Result: double-digit lift. Same page, same offer — completely different framing.

Hootsuite tested vague benefit language vs. concrete outcomes. The specific version won.

→ High leverage, especially close to conversion.

Tier 3: Layout & design

Button colors, image swaps, spacing. This changes whether someone notices.

→ Low leverage — unless Tiers 1 and 2 are already optimized.

The problem is that most teams start at Tier 3 and wonder why 9 out of 10 tests come back inconclusive.

The fix: Test up the hierarchy

Before your next experiment, ask: Am I testing what someone sees — or what they believe?

If your tests keep stalling, reevaluate the tier. The biggest wins are in messaging and trust, not button radius.
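If you keep your test ideas in a backlog, the hierarchy can be sketched as a simple sort. This is an illustration only: the `ExperimentIdea` class, the tier labels, and the sample ideas are hypothetical names, not part of Casey's framework or any tool.

```python
from dataclasses import dataclass

# Lower number = higher leverage, following the three tiers above
TIER_RANK = {"messaging": 1, "trust": 2, "layout": 3}


@dataclass
class ExperimentIdea:
    name: str
    tier: str  # one of the keys in TIER_RANK


def prioritize(backlog):
    """Order ideas so messaging tests come first and layout tests last."""
    return sorted(backlog, key=lambda idea: TIER_RANK[idea.tier])


# Hypothetical backlog
backlog = [
    ExperimentIdea("Change button color", "layout"),
    ExperimentIdea("Add a line of social proof near the CTA", "trust"),
    ExperimentIdea("Reframe the hero headline around the core problem", "messaging"),
]

for idea in prioritize(backlog):
    print(f"Tier {TIER_RANK[idea.tier]}: {idea.name}")
```

Tagging each idea with its tier before it enters the queue makes it obvious when a backlog is mostly Tier 3.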

Now that you have the framework, here's what comes next.

Stop testing what’s easy. Start testing what matters.

7 A/B tests from Netflix, IBM, and Schwab — plus the 90-minute workshop with Casey. Inside the AI-Led Growth community.

The implementation path for revenue-building inside AI-Led Growth:

→ The Conversion Vault — The tests that actually win. Each includes the hypothesis, variables, results, and the behavioral principle behind why it worked.

→ The 90-minute workshop replay — Casey and Jason's full breakdown, including the 3-part framework for crafting offers your audience can’t ignore.

→ Creating Great AI Outputs course — 15 interactive modules to help you put what you learned into practice.

→ The workshop library — Past sessions with Lovable, Webflow, plus content strategy deep-dives with Jason and me.

The price:

Joining fee is $650. With code CRO, you pay $65 — 90% off.

Code expires January 31st, 2026.

That's less than one wasted A/B test costs in dev time.

Until next time,

Marcel & Jason