🗓️ This week in the AI-Led Growth community: a 2-hour Lovable workshop, a 7-step landing page framework, and 1 free month of Lovable Pro.

Founding members get access to everything.

We've calibrated AI voice guidelines for more than 50 brands in the last year. Think Webflow, Lovable, and Ramp. The difference between content that sounds like them and content that sounds like everyone else? It has almost nothing to do with the AI.

You know the feeling. You open ChatGPT or Claude, get generic output, and then spend round after round massaging, reshaping, and wrestling with it to get it somewhere usable. Eventually the effort becomes counterproductive: you've spent more time editing than you saved.

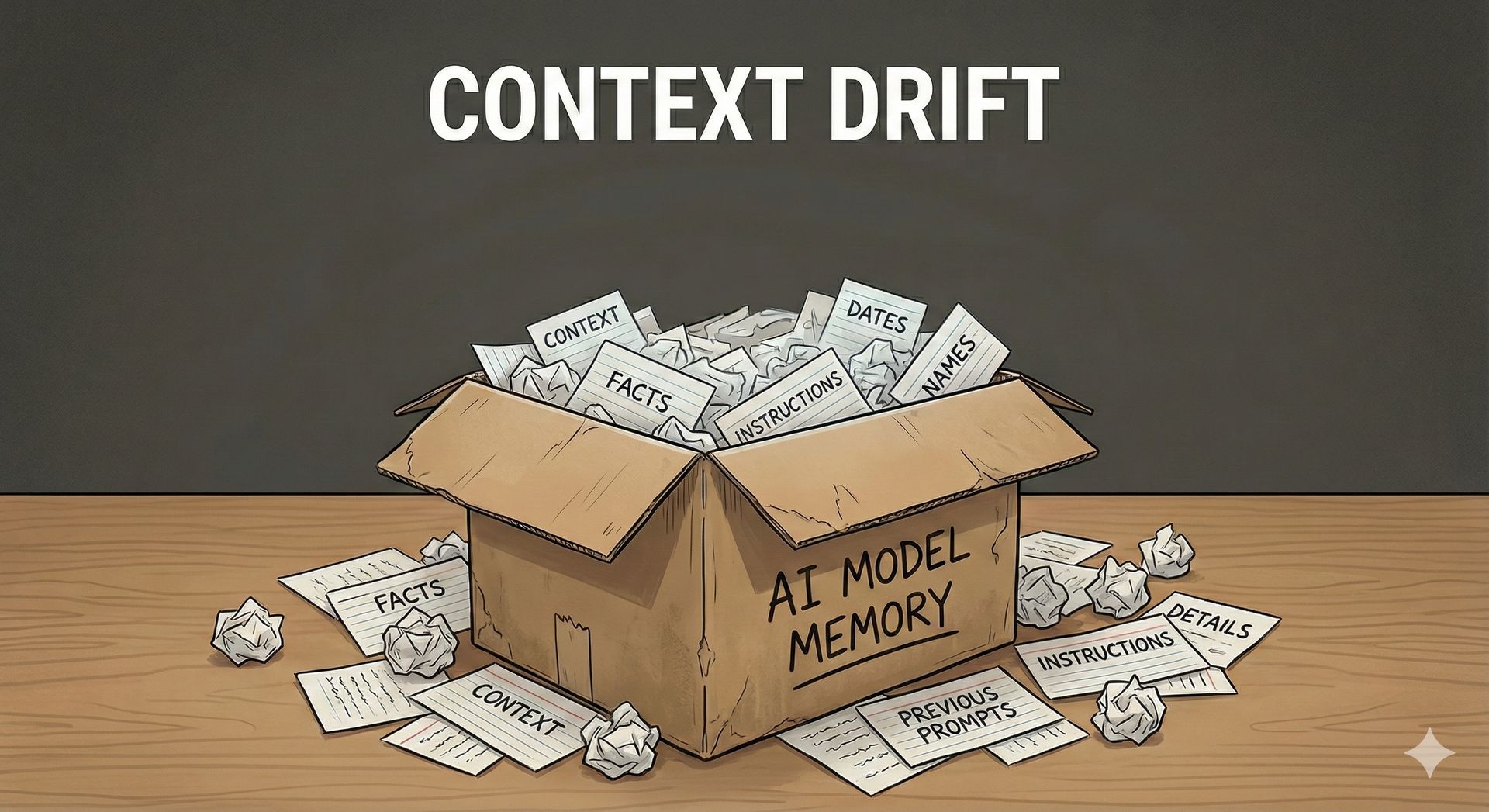

Here's why: Chroma's research found that model performance degrades by 17-35% after just 10 conversation turns. Your instructions get buried under everything that came after them, and the model follows them less and less reliably. But that's only half the problem.

The other half? Your instructions were too vague to begin with.

True story: A client kept prompting their AI to sound "more conversational." After fifteen rounds of revision, the output started inserting "howdy" and "y'all" into their B2B SaaS content. The AI wasn't broken. It was doing exactly what it was told. "Conversational" has maybe two or three interpretations in the model's training data and none of them were what the client actually meant.

Your AI content sounds generic because your instructions are generic. And your instructions are generic because you haven't actually made the decisions that would make them specific.

We're going to show you why your current docs aren't working, what's technically happening when output quality degrades mid-conversation, and what to build instead.

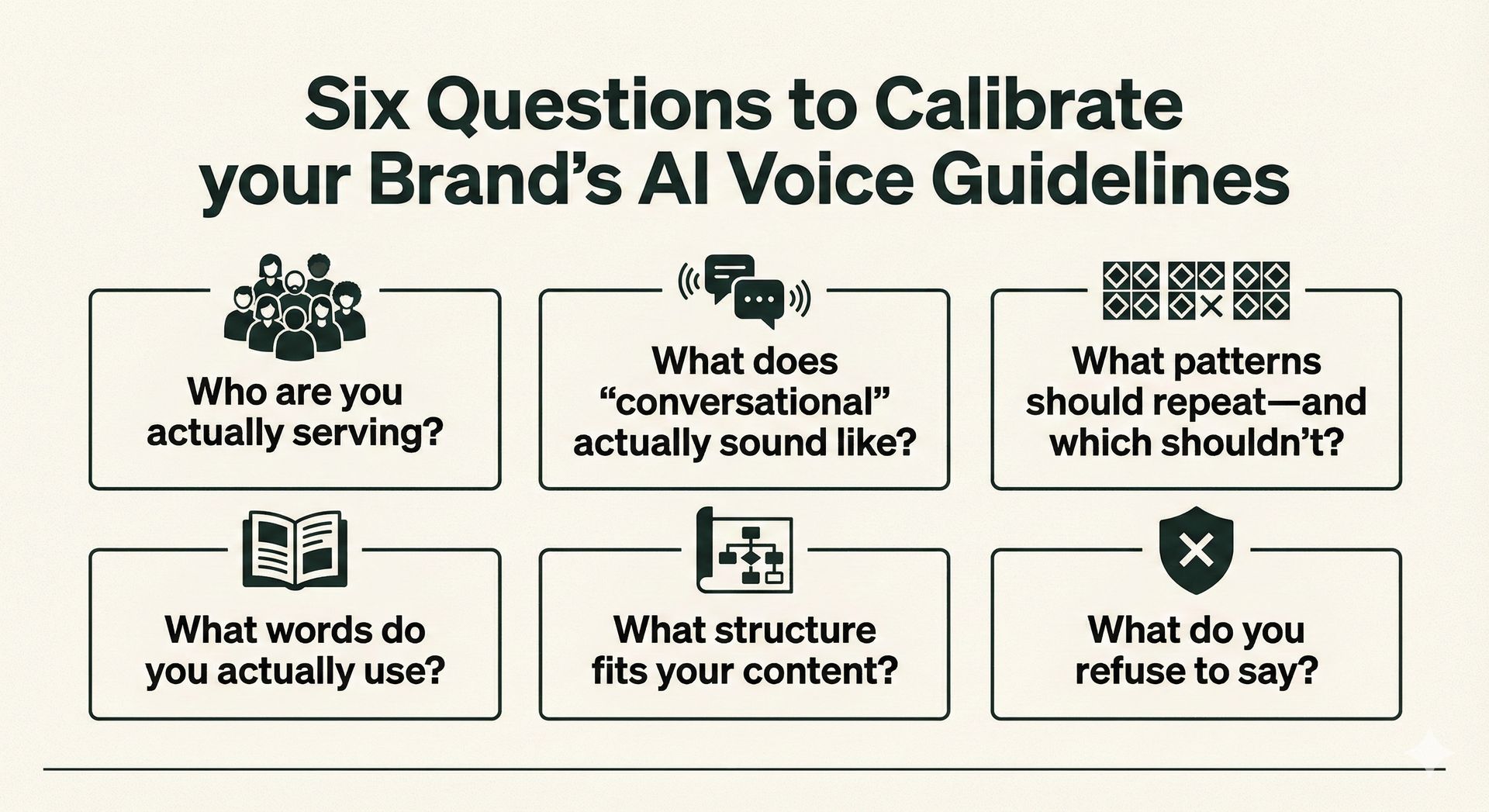

The six questions your brand guidelines don't answer

"Professional but approachable" isn't a prompt.

When we review brand guidelines, the same gaps show up every time. Teams are quick to describe who they want to be. They almost never define who they're actually talking to.

Here's what's usually missing:

Who are you actually serving? Not your TAM. The specific person reading this specific piece. A senior data analyst needs different language than a product manager evaluating tools. Your guidelines probably don't distinguish between them.

What does "conversational" actually sound like? If you can't point to a paragraph that demonstrates it, you haven't decided. You've just picked a word.

What patterns should repeat—and which shouldn't? LLMs follow patterns. If you don't specify which patterns to learn versus which to vary, you'll get the same intro across forty articles.

What words do you actually use? Not "innovative" and "solutions." The specific terminology your team uses when they're not trying to sound like marketing.

What structure fits your content? Do you lead with the answer or build to it? Do you use examples before frameworks or after? These aren't style preferences—they're structural decisions.

What do you refuse to say? The phrases you'd never use, the claims you'd never make, the tone you'd never take. Knowing what's off-limits is as important as knowing what's on-brand.

Brand guidelines are rarely complete. Most companies create them once—maybe over a month—and then they sit forever collecting dust. Meanwhile, everything about how content gets made has changed.

Why your twentieth draft sounds nothing like your first

You've experienced this: the first output from a conversation is decent. By message twenty, it's completely off, even though you're using the same instructions.

This is context drift. Think of the model's context window as a box: there's only so much it can hold before things start falling out. And here's the problem: the AI will act as if it can still see what fell out. It can't. It just fills in the gaps.
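To make the box concrete, here is a toy simulation, a minimal sketch under stated assumptions rather than how any particular chat product works. It assumes capacity is counted in words (real models count tokens) and that the oldest messages are simply dropped when the conversation overflows; some tools summarize or handle overflow differently. `CAPACITY` and `fit_to_capacity` are hypothetical names.

```python
# A toy model of the "box": the context window has a fixed capacity, and once a
# conversation outgrows it, the oldest messages are the first to fall out.
# Assumption: capacity is counted in words; real models count tokens.

CAPACITY = 60  # hypothetical size of the box, in words


def word_count(message: str) -> int:
    return len(message.split())


def fit_to_capacity(history: list[str], capacity: int = CAPACITY) -> list[str]:
    """Return the most recent messages that fit; everything older is dropped."""
    kept: list[str] = []
    used = 0
    for message in reversed(history):  # walk from newest to oldest
        cost = word_count(message)
        if used + cost > capacity:
            break  # this message and everything before it falls out of the box
        kept.append(message)
        used += cost
    return list(reversed(kept))  # restore chronological order


history = ["STYLE GUIDE: use contractions, never say 'leverage'."]
for turn in range(1, 8):
    history.append(f"Draft {turn}: " + "revise the intro again please " * 2)

visible = fit_to_capacity(history)
print(f"{len(visible)} of {len(history)} messages still fit")  # 5 of 8
print("Style guide visible:", any(m.startswith("STYLE GUIDE") for m in visible))  # False
```

The style guide was the very first message, so it is the first thing to go, even though nothing about the later drafts told the model to stop following it.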

This is why training AI through back-and-forth doesn't scale. You can't calibrate your way to consistency inside a single chat window. The context has to live somewhere persistent.

So we build four context artifacts for every client: company research, voice guidelines, audience personas, and customer language.
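One way to picture "persistent" context, sketched under assumptions: the four artifacts live outside the chat and are re-sent at the top of every request, so trimming old turns can never push them out. The artifact contents, the word-based budget, and `build_request` below are hypothetical placeholders, not a description of our actual tooling. What matters is the ordering: artifacts are pinned first, and only the conversation history gets trimmed.

```python
# Hypothetical sketch: the four context artifacts are stored separately and
# pinned to the front of every request; only recent chat turns compete for
# whatever budget is left. Budget is in words (real models count tokens).

ARTIFACTS = {  # placeholder contents; in practice each is a maintained document
    "company_research": "Company research: what we sell, to whom, and why.",
    "voice_guidelines": "Voice guidelines: use contractions; never say 'leverage'.",
    "audience_personas": "Audience personas: senior data analysts evaluating tools.",
    "customer_language": "Customer language: phrases customers use in sales calls.",
}


def words(text: str) -> int:
    return len(text.split())


def build_request(history: list[str], budget: int) -> list[str]:
    """Pin all artifacts first, then fill the remaining budget with recent turns."""
    pinned = list(ARTIFACTS.values())
    remaining = budget - sum(words(a) for a in pinned)
    if remaining < 0:
        raise ValueError("The artifacts alone exceed the budget; tighten them.")
    recent: list[str] = []
    for message in reversed(history):  # newest turns get priority
        if words(message) > remaining:
            break  # older turns are trimmed; the artifacts never are
        recent.append(message)
        remaining -= words(message)
    return pinned + list(reversed(recent))


history = [f"Turn {i}: please revise the intro" for i in range(1, 21)]
request = build_request(history, budget=80)
print(len(request))  # 12: the 4 pinned artifacts plus the 8 most recent turns
print(request[4])    # Turn 13: please revise the intro
```

Compare this with a plain chat, where the voice guidelines are just one more early message and fall out along with everything else.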

In the meantime, here's one short-term fix: tell the AI to ask questions when it's unsure. Most people forget that LLMs can ask for clarification instead of guessing. And periodically ask it, "Are you still following the writing guidelines?" That prompts the model to look back at them.
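Both habits can be built into how you send messages. The sketch below is hypothetical: the wording of the two instructions and the every-fifth-turn cadence are assumptions, and where your tool supports custom instructions or a system prompt, that is a natural home for the ask-first rule instead of the first message.

```python
# Hypothetical helper: attaches the two short-term habits to outgoing messages.
# The wording and the every-fifth-turn cadence are assumptions, not a standard.

ASK_FIRST = ("If anything in my request is unclear, ask me a clarifying "
             "question instead of guessing.")
CHECK_IN = "Before you continue: are you still following the writing guidelines?"


def prepare_turn(turn_number: int, message: str, every: int = 5) -> str:
    """Prefix turn 1 with the ask-first rule and every `every`-th turn with a check-in."""
    parts: list[str] = []
    if turn_number == 1:
        parts.append(ASK_FIRST)
    if turn_number % every == 0:
        parts.append(CHECK_IN)
    parts.append(message)
    return "\n\n".join(parts)


for n in range(1, 11):
    outgoing = prepare_turn(n, f"Revise draft {n}.")
    if outgoing != f"Revise draft {n}.":  # only show turns that were modified
        print(f"--- turn {n} ---\n{outgoing}\n")
```

Run as-is, it modifies turns 1, 5, and 10 and leaves the rest untouched. This only slows drift down; it doesn't replace persistent context.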

But the real fix isn't conversational. It's architectural.

Want to see how we actually build these systems?

We put 10,000 hours of experience into the Content Strategy course. It's our step-by-step process, and we're sharing it inside ALG.

What an artifact looks like vs. what a guideline looks like

Here's the difference between documentation that works for AI and documentation that doesn't:

A guideline says: "Be conversational but professional."

An artifact says: "Use contractions. Start 30% of sentences with 'And,' 'But,' or 'So.' Never use 'leverage,' 'utilize,' or 'solutions.' When explaining a concept, lead with what it does before explaining how it works."

The guideline describes a vibe. The artifact models a pattern.
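One practical consequence: an artifact's rules are concrete enough to check mechanically, which a vibe never is. Here is a minimal sketch of a draft checker built from the example artifact. The function name, the naive sentence splitting, and the ±10-point tolerance around the 30% target are all assumptions, and the last rule (lead with what a concept does before how it works) still needs a human reader.

```python
import re

# Rules copied from the example artifact; the tolerance is a hypothetical choice.
BANNED_WORDS = {"leverage", "utilize", "solutions"}
OPENERS = {"And", "But", "So"}
TARGET_OPENER_SHARE = 0.30
TOLERANCE = 0.10  # hypothetical: accept 20-40% instead of exactly 30%


def check_artifact_rules(text: str) -> dict:
    """Report how a draft measures against the checkable rules in the artifact."""
    # Naive sentence split: ., ! or ? followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    # Words, keeping straight internal apostrophes so "don't" stays one token.
    words = re.findall(r"[A-Za-z]+(?:'[A-Za-z]+)?", text)

    opener_count = sum(1 for s in sentences if s.split()[0].rstrip(",") in OPENERS)
    opener_share = opener_count / len(sentences) if sentences else 0.0

    return {
        "sentences": len(sentences),
        "opener_share": opener_share,
        "within_target": abs(opener_share - TARGET_OPENER_SHARE) <= TOLERANCE,
        "banned_words": sorted({w.lower() for w in words if w.lower() in BANNED_WORDS}),
        "contractions": sum(1 for w in words if "'" in w),
    }


sample = ("We built this for analysts. But most tools leverage dashboards nobody reads. "
          "So we don't. It's a smaller product on purpose.")
print(check_artifact_rules(sample))
```

For the sample, the checker finds four sentences with an opener share of 0.5 (outside the 20-40% band), flags "leverage," and counts two contractions. Try writing the same checker for "be conversational but professional." There's nothing to test.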

Teams often say "I don't want em dashes" because em dashes look AI-generated. So the AI replaces them with colons. Same structure, same problem. You told it what to avoid but didn't model what you actually want instead.

Without an artifact: The AI gives you its best statistical guess, which is the same guess it gives everyone else. Everyone tells the AI to be "conversational." Everyone gets the same interpretation of conversational.

With an artifact: The AI replicates specific patterns you've demonstrated: sentences, structures, word choices. Output is consistent because the reference material is consistent.

Here's the biggest mistake we see: starting with workflows before setting context. Teams buy tools, build automations, optimize prompts—then wonder why output quality is inconsistent. They're scaling before they've defined what good looks like.

Your differentiator isn't a better prompt. It's you: your specific decisions, your specific examples, your specific patterns. Think of the AI as a junior writer. It doesn't know anything about your business. It's not an expert. You are. The only way it can learn who you are and what you know is by asking you.

So tell it to ask. Let it interview you. That's how you surface the knowledge that only you have. Then capture it in an artifact that persists beyond any single chat.

If your content sounds generic, it's because you told the AI to be "conversational" instead of showing it what conversational looks like. If your twentieth draft is worse than your first, it's because context drifts and you haven't built a system to persist it. If your brand guidelines aren't working, it's because they describe vibes instead of modeling patterns.

Fix the inputs. The outputs inevitably follow.

→ Want to see how we actually build these artifacts? The Win AI Search course walks through all four—company research, voice guidelines, audience personas, and customer language. We demonstrate calibration in real time and share the 12 prompts we use for our clients.

Everything's inside the AI-Led Growth community.

Want the full framework?

Inside the community, we're sharing the exact process we use to calibrate brand voice for clients like Webflow and Ramp—including the 12 prompts we use to build context artifacts, the full course on AI Content Strategy, and real before/after examples.

See you inside,

Marcel + Jason